Kinect Augmented Reality – Part 2

Following on from Kinect Augmented Reality – Part 1, we now look at getting the Kinect's depth sensor data into our browser.

Because of the sheer amount of data coming from the Kinect, WebSockets are the only viable option for us. There are a fair few examples of this online; however, they are all quite old and generally don't work on current setups because of outdated dependencies. I found an old Kinect WebSockets Python script that uses libfreenect's Python wrapper. The script didn't work as it was, but after rewriting a fair bit of it I was able to get data from the Kinect into the browser.
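To put "the sheer amount of data" into numbers, here is a back-of-the-envelope calculation. It assumes the original Kinect's 640×480 depth stream at 30 frames per second, with each 11-bit depth reading stored in a 16-bit integer; these are assumptions about the sensor mode, not measurements from this project.

```python
# Back-of-the-envelope bandwidth for an uncompressed Kinect depth stream.
# Assumed sensor mode: 640x480 depth at 30 fps, one 16-bit value per pixel.
WIDTH, HEIGHT = 640, 480
BYTES_PER_PIXEL = 2   # 11-bit depth reading padded to a 16-bit integer
FPS = 30


def raw_depth_bandwidth(width=WIDTH, height=HEIGHT,
                        bytes_per_pixel=BYTES_PER_PIXEL, fps=FPS):
    """Return (bytes per frame, bytes per second) for an uncompressed stream."""
    frame_bytes = width * height * bytes_per_pixel
    return frame_bytes, frame_bytes * fps


frame_bytes, per_second = raw_depth_bandwidth()
print(f"{frame_bytes:,} bytes per frame")           # 614,400 bytes per frame
print(f"{per_second / 1e6:.1f} MB/s uncompressed")  # 18.4 MB/s uncompressed
```

Roughly 18 MB every second is far more than repeated HTTP requests handle comfortably, which is why a persistent WebSocket connection plus compression makes sense here.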

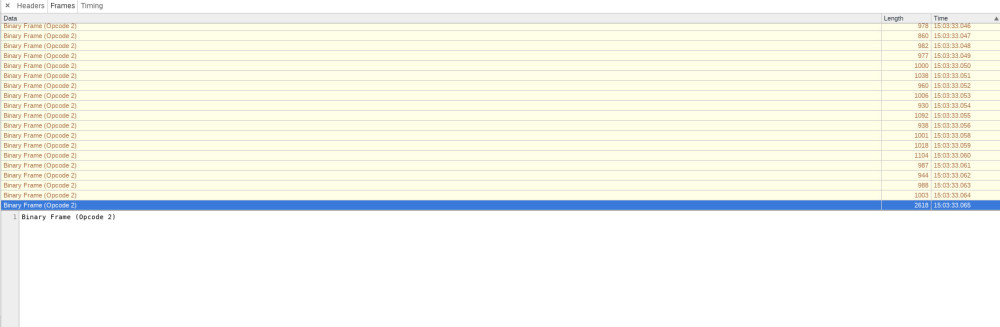

The data is first compressed into a binary format with PyLZMA and then sent over the WebSocket. In the browser, it is decompressed with the JavaScript LZMA library's decompress function. Once it is decompressed, we can create a particle system in three.js to display our depth stream live in the browser, as shown below.
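The rewritten script isn't reproduced here, so the following is only a sketch of the compression step under stated assumptions. It swaps PyLZMA for Python's built-in `lzma` module and uses the legacy `.lzma` ("alone") container; whether a particular JavaScript LZMA decoder accepts exactly this container is an assumption worth checking. The frame is synthetic, standing in for one read from libfreenect, and the helper names are hypothetical.

```python
import array
import lzma
import sys

WIDTH, HEIGHT = 640, 480  # assumed depth resolution


def pack_depth_frame(depth_values):
    """Pack depth readings (0-2047) into little-endian unsigned 16-bit bytes."""
    frame = array.array("H", depth_values)  # "H" = unsigned short
    if sys.byteorder == "big":
        frame.byteswap()                     # always send little-endian
    return frame.tobytes()


def unpack_depth_frame(data):
    """Inverse of pack_depth_frame: bytes back to a list of ints."""
    frame = array.array("H")
    frame.frombytes(data)
    if sys.byteorder == "big":
        frame.byteswap()
    return frame.tolist()


def compress_frame(depth_values):
    """Compress one frame into the legacy .lzma ("alone") container."""
    return lzma.compress(pack_depth_frame(depth_values),
                         format=lzma.FORMAT_ALONE)


def decompress_frame(blob):
    """What the browser side does: decompress, then read 16-bit values."""
    return unpack_depth_frame(lzma.decompress(blob, format=lzma.FORMAT_ALONE))


# Synthetic stand-in for a libfreenect frame: a repeating ramp of depths.
frame = [600 + (x + y) % 400 for y in range(HEIGHT) for x in range(WIDTH)]
raw = pack_depth_frame(frame)
blob = compress_frame(frame)  # this is what would go out as a binary message
print(f"raw {len(raw):,} bytes -> compressed {len(blob):,} bytes")
```

The `.lzma` container is chosen in this sketch because it is the simple format classic LZMA decoders read; the newer `.xz` container adds framing that a small decoder may not support.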

Whilst we're still quite far from our sandbox visualisation, we've got a good deal of the groundwork done here: data is coming out of the Kinect into our browser, and we're displaying it in three.js. However, the sandbox visualisation needs at least two main things:

1. Contour lines
2. Colouring of the bands
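As a rough idea of what "bands" means here: each depth reading falls into one of a fixed number of equal-width depth intervals, and each interval later gets its own colour. The boundaries between neighbouring bands are where contour lines would sit. The sketch below uses a hypothetical `depth_to_band` helper, and the depth range and band count in the usage example are made-up values, not ones from this project.

```python
def depth_to_band(depth, near, far, num_bands):
    """Map a raw depth reading to a band index in [0, num_bands - 1].

    Readings outside [near, far] are clamped into the first or last band.
    """
    if far <= near or num_bands < 1:
        raise ValueError("need far > near and at least one band")
    clamped = min(max(depth, near), far)
    t = (clamped - near) / (far - near)         # 0.0 at near, 1.0 at far
    return min(int(t * num_bands), num_bands - 1)


# Hypothetical range of 600-1000 raw units split into 8 bands.
print([depth_to_band(d, 600, 1000, 8) for d in (550, 600, 700, 1000, 1200)])
# [0, 0, 2, 7, 7]
```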

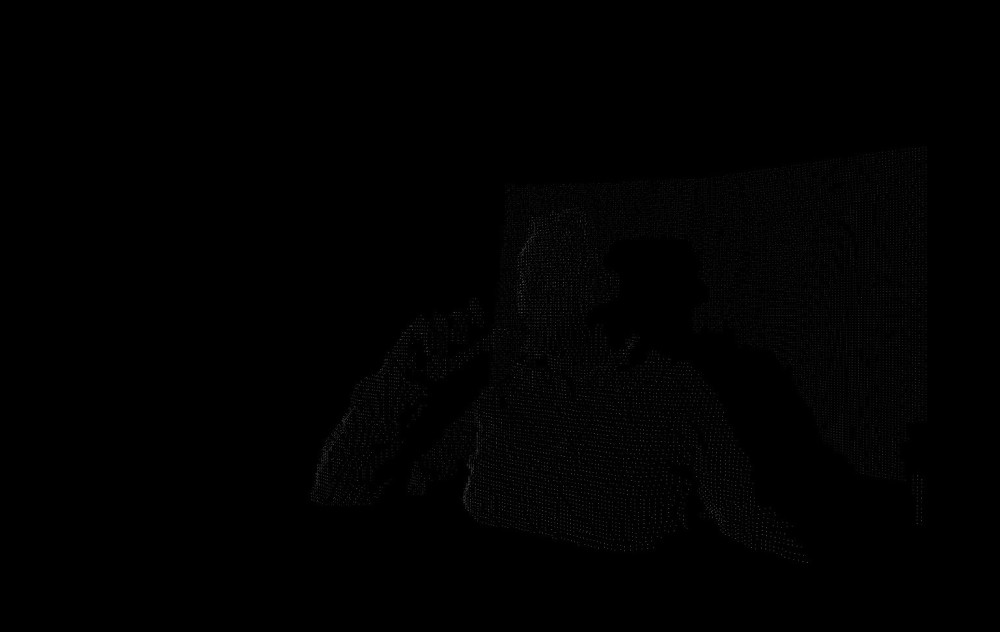

Next, I wanted to colour the particles by their depth to get a feel for colouring the bands. This was easy to achieve with the brilliant chroma.js library.
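In the browser, chroma.js handles this with something along the lines of `chroma.scale([...]).domain([near, far])`. The Python below only spells out the same idea, linear interpolation in RGB between colour stops, so the mapping is visible. The colour stops and depth range are hypothetical, not the ones used in this project.

```python
# Hypothetical colour stops (not taken from the post): blue, green, red.
STOPS = [(0, 0, 255), (0, 255, 0), (255, 0, 0)]


def lerp_colour(stops, t):
    """Linearly interpolate in RGB between evenly spaced colour stops.

    t is clamped to [0, 1]; 0 gives the first stop and 1 the last.
    """
    t = min(max(t, 0.0), 1.0)
    if len(stops) == 1:
        return stops[0]
    scaled = t * (len(stops) - 1)
    i = min(int(scaled), len(stops) - 2)  # index of the lower stop
    frac = scaled - i                     # how far towards the upper stop
    lo, hi = stops[i], stops[i + 1]
    return tuple(round(a + (b - a) * frac) for a, b in zip(lo, hi))


def depth_to_hex(depth, near, far, stops=STOPS):
    """Colour a depth reading by its position between near and far."""
    r, g, b = lerp_colour(stops, (depth - near) / (far - near))
    return f"#{r:02x}{g:02x}{b:02x}"


print(depth_to_hex(600, 600, 1000))   # #0000ff
print(depth_to_hex(800, 600, 1000))   # #00ff00
print(depth_to_hex(1000, 600, 1000))  # #ff0000
```

Each particle would get the colour for its own depth value, so nearby depths share similar colours and the scene reads like a smooth height map.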

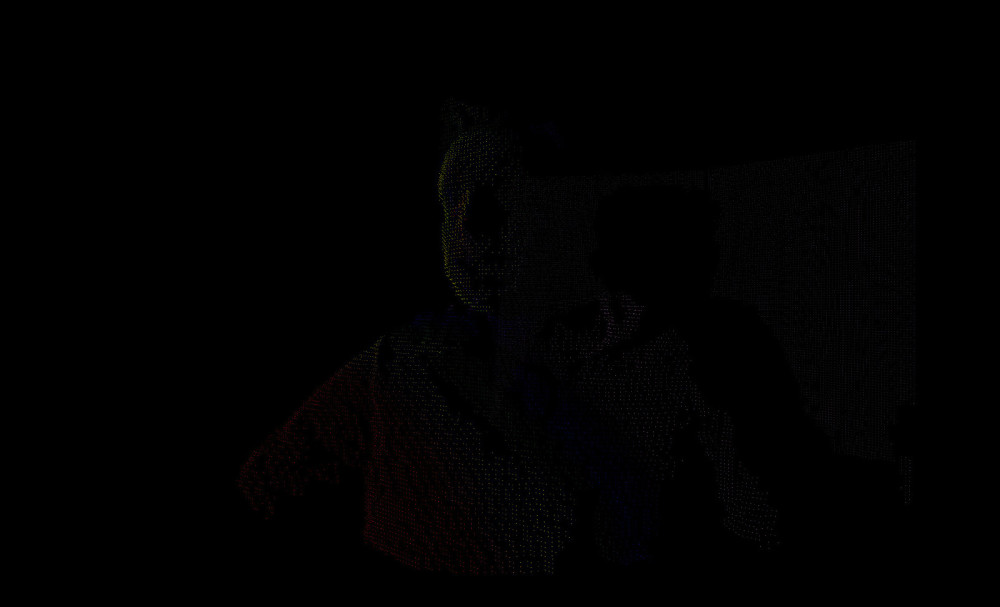

The sandbox projection is going to be 2D, so there's no real need to place particles at different depths, and colouring individual particles has no direct use either, but it was a nice thing to try. As a step towards our goal, I then turned our 3D scene into a 2D one: I removed the adjustment of each particle's z coordinate, which leaves a flat 2D visualisation with the particles coloured by their depth value.
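Here is a minimal sketch of that change, assuming the particle positions are built as a flat `[x0, y0, z0, x1, y1, z1, ...]` list, the layout a three.js `BufferGeometry` position attribute uses (three numbers per vertex). The `depth_frame_to_positions` helper, its `step` sampling and `z_scale` are hypothetical; with `flat=True` every z is simply 0, which is the "removed z adjustment" described above.

```python
def depth_frame_to_positions(depth, width, height, step=4,
                             flat=True, z_scale=1.0):
    """Turn a row-major depth frame into [x0, y0, z0, x1, y1, z1, ...].

    Pixels are sampled every `step` rows and columns to thin out particles.
    flat=True sets every z to 0 (2D layout); otherwise z = -depth * z_scale,
    so with a camera on the +z axis larger readings sit further away.
    Raw 11-bit Kinect readings are not linear in distance; this ignores that.
    """
    positions = []
    for row in range(0, height, step):
        for col in range(0, width, step):
            d = depth[row * width + col]
            x = col - width / 2   # centre the cloud on the origin
            y = height / 2 - row  # flip so image row 0 ends up at the top
            z = 0.0 if flat else -d * z_scale
            positions.extend((x, y, z))
    return positions


# Tiny 4x2 frame, every pixel sampled.
frame = [100, 200, 300, 400, 500, 600, 700, 800]
print(depth_frame_to_positions(frame, 4, 2, step=1)[:6])
# [-2.0, 1.0, 0.0, -1.0, 1.0, 0.0]
```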

Join me in Part 3, where we look at drawing contours from our data points.